The Future of 'Live' Theatre

This post is based on a talk I gave at MTL Connect on October 14, 2021 and is partially inspired by a previous blog post: FutureTheatre could now be more relevant than ever.

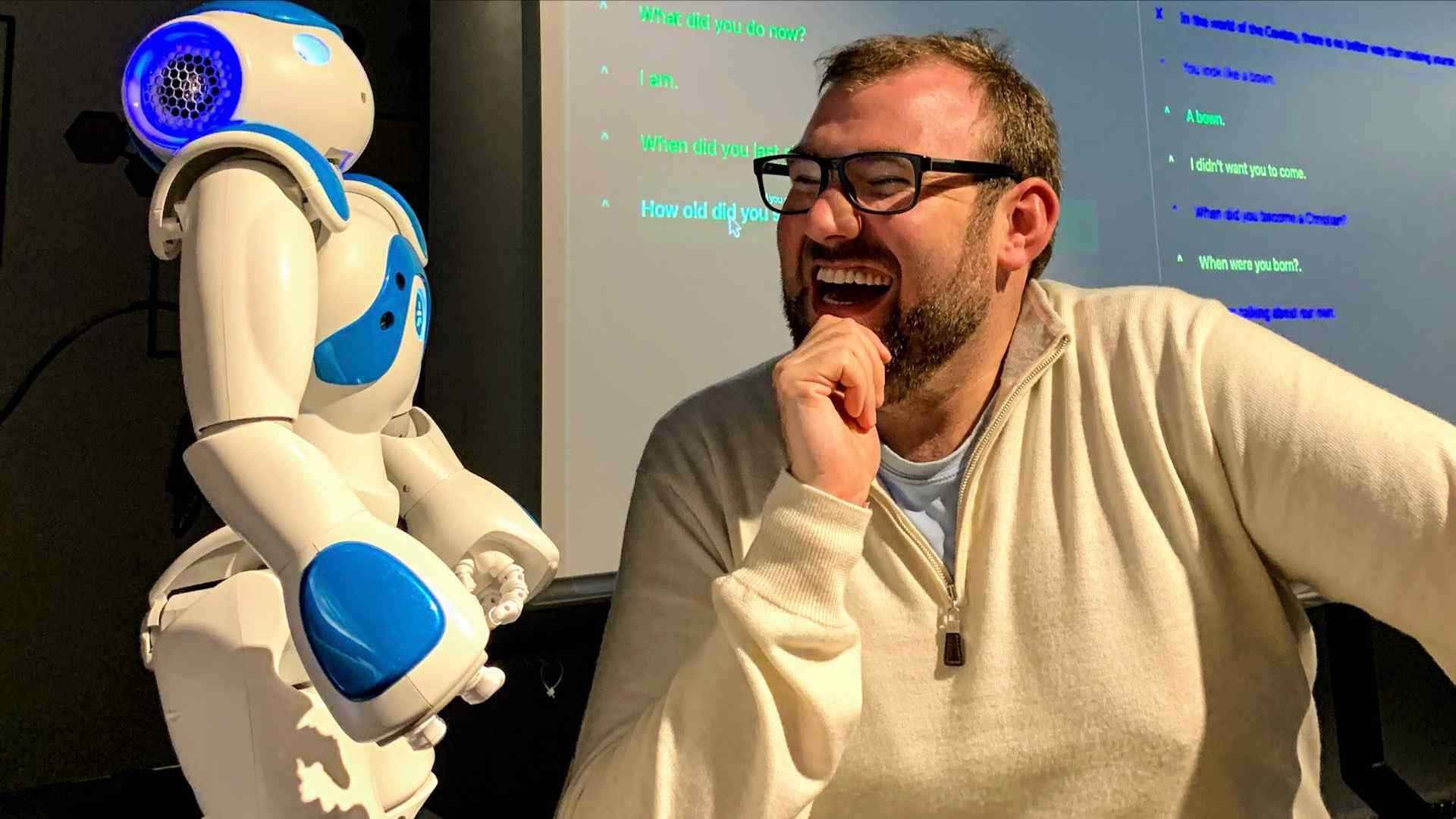

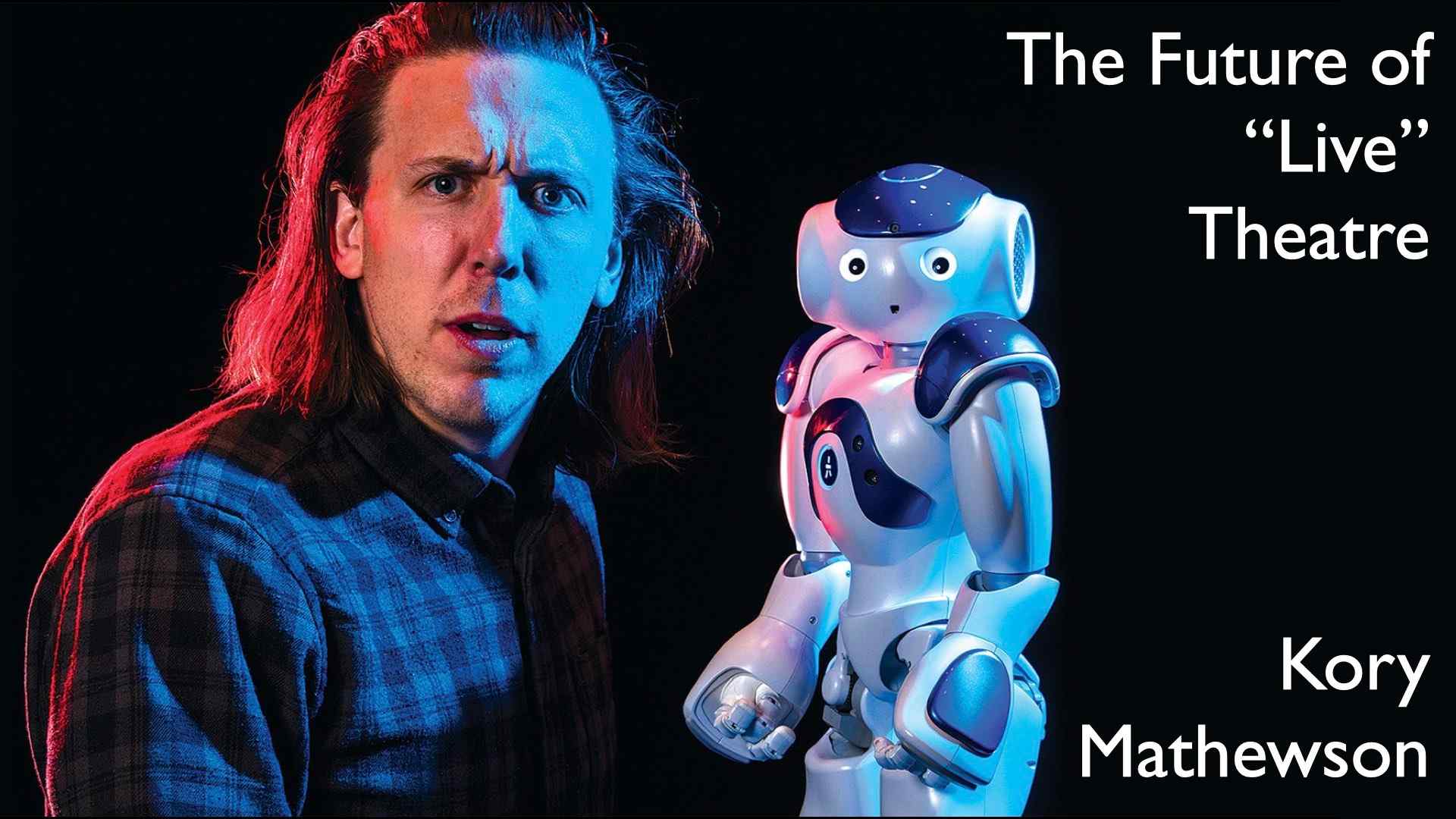

Artificial Intelligence researcher and Improvisation performer, Kory Mathewson and Blueberry the robot, on March 6, 2018.

Photo by John Ulan, © 2018.

Hi! I am Kory 👋 I am an improvisational performance artist and research scientist. And, I am excited to tell you about The Future of “Live” Theatre.

Artificial intelligence will drastically change the theatre experience. And, it has already begun. From robots on stage, to dialog systems, to personalized performances, artists are challenging the fabric of reality and exploring just how ’live’ live theatre needs to be.

Showstopper! The Improvised Musical in London, UK. Photo by Kory Mathewson.

Let’s start with a story. This is a photo from my last visit to London, England. On September 23, 2019 I went to see some great friends and collaborators in their show Showstopper! The Improvised Musical at the Criterion Theatre in London’s West End.

I was so excited to see them live. In part, because I love the feel of live theatre. It is full of hidden moments. For example, the moment before the performance as the audience is slowly shuffling in. When the murmur of the crowd rises and falls with anticipation, this is the calm before the storm, when anything is possible. And, that is what the theatre is for.

Theatre is a realm of the imagination, where everything can happen live before your very eyes.

But, what is live theatre?

Simply put … live theatre is a live performance in front of a live audience. But does the performer need to be ‘alive’? And does the audience need to be ‘alive’? In the past, yes; in the now, less so; in FutureTheatre, no!

Theatre in Antwerp Belgium, June 2019. Photo by Kory Mathewson.

These ideas are more relevant than ever, due to the pandemic-induced global creative constipation.

The pandemic accelerated our global alone togetherness. Isolation restricted live audiences. But, creators do not stop creating, and we humans continue to seek artistic inputs.

COVID-19 abruptly pushed many artists online, where they had to reach audiences in new and interesting ways. Live streaming had its bumps everywhere, and especially in theatre: it was hard for live performers to quickly scale up and compete with streaming services that offered nearly unlimited television shows and movies.

This led me, and many others, to ask big questions: 1) what is the theatre of the future in a digitally connected world? and 2) how is theatre of the future prepared, produced, and performed?

In this talk, I present a vision of FutureTheatre by looking at what has happened, what is happening, and what can happen in the days to come.

Improvised Dungeons & Dragons RFT Edition June 23, 2016 in Edmonton, Canada. Photo credit Rapid Fire Theatre.

Theatre is an act of co-creativity. It is a creative dialogue between the performers on stage, and the audience.

Creatives are provocateurs who predict the future and interpret the past into simulated realities.

Artists exist in the now to tell us all something important we ought to know.

The audience’s role is paramount: they complete the loop of creation through their interaction. In this way, theatre is a co-authoritative experience, as my friend Dante Camarena at Transitional Forms likes to say, in part inspired by Gagliano et al.’s 2021 work: Agence, A Dynamic Film about (and with) Artificial Intelligence.

Sleep No More. Nicholas Bruder and Sophie Bortolussi with audience members © Robin Roemer Photograph from Sleep No More’s Immersive Intertext

Many companies produce theatre in the immersive realm. Companies such as Third Rail, Dream Think Speak, and Secret Cinema are expanding notions of audience experience to make it more pan-sensory.

Audience embodiment factors into the performance in quasi-open-world experiences like Sleep No More.

In this interactive, site-specific work from Punchdrunk, Shakespeare’s Macbeth is situated in a dim, 1930s-era McKittrick Hotel that audiences are invited to navigate themselves.

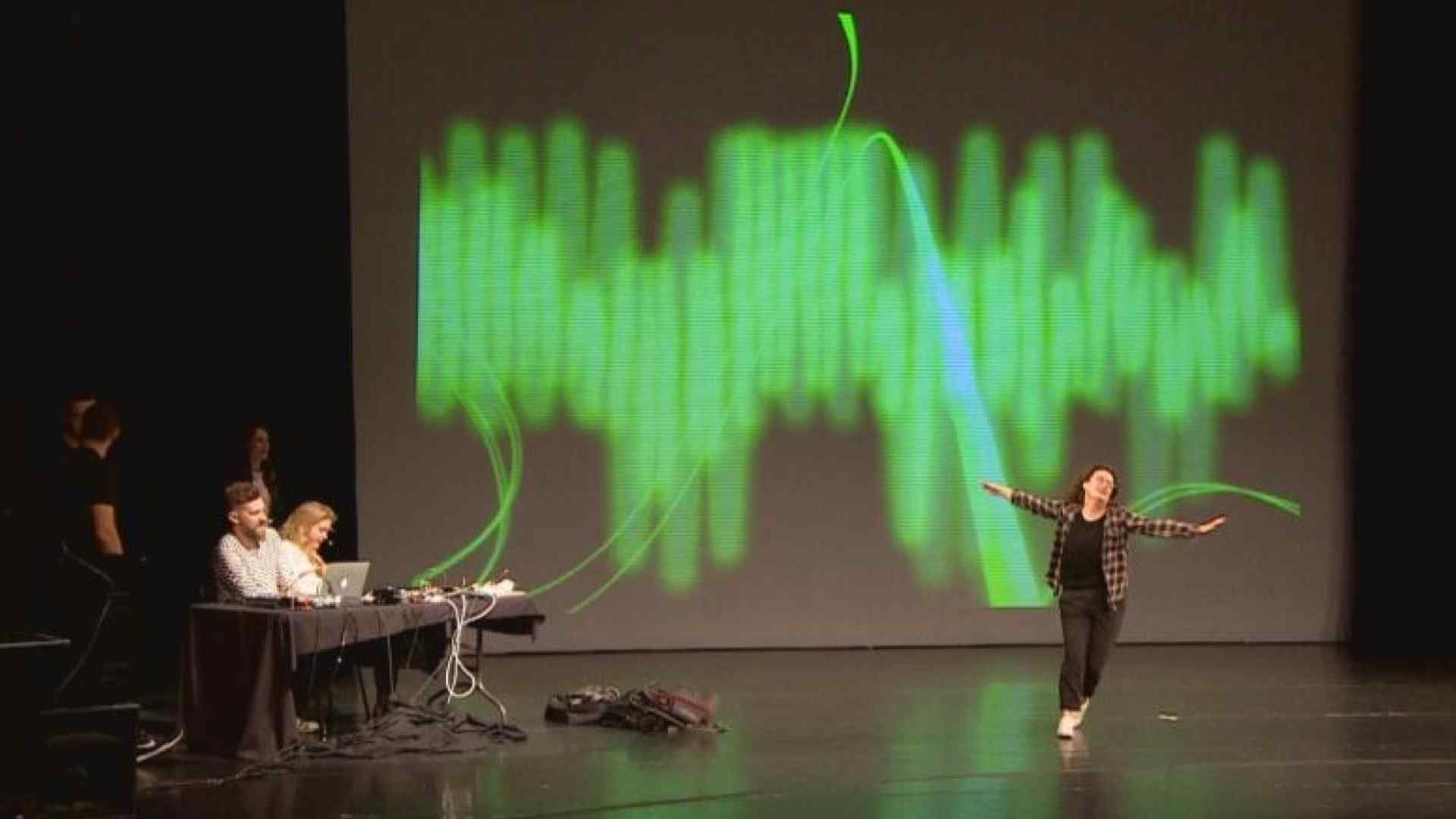

Improbotics with Rapid Fire Theatre at Alberta Machine Intelligence Institute’s Deep Learning and Reinforcement Learning Summer School 2019.

FutureTheatre will not only broadcast information to the audience. The theatre will be full of interactional experiences.

Audience members will be invited into the production providing information and context utilizing sub-channels like chat, text message and audio/video response. One example is in my 2019 production of Improbotics for the Alberta Machine Intelligence Institute’s Deep Learning and Reinforcement Learning Summer School where audience members engaged in text-message conversations with chatbots. The lines from the human side of the conversation were then used as dialog later in the show.

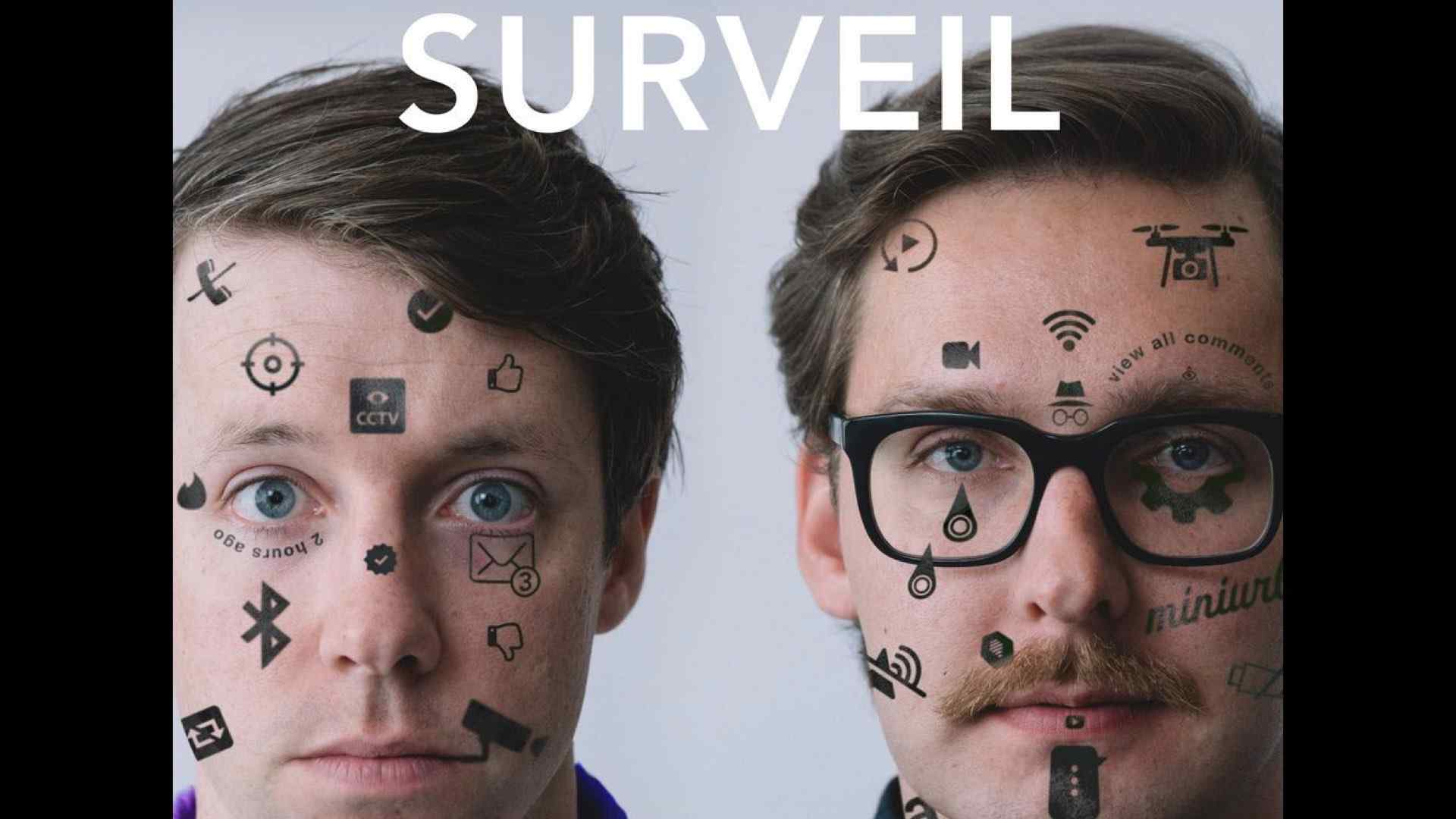

Surveil (2019) from Hip.Bang!

Audience interaction could begin well before they enter into the venue, and end well after.

By using information that ticket purchases provide before the show, components of the show can be personally tailored or adapted. This was done masterfully in Hip.Bang!’s show Surveil, which used a combination of rapid back-channel hacking and online information gathering to bring details of audience members into the live production, all with the informed consent of the audience.

Tiny Bear Jaws’ Elena Belyea in iod (2021). Photo by Anna Cooley.

The content of the performance could be adapted night to night thanks to the incredible abilities of artificial intelligence. This is done in “iod”, a new play from Tiny Bear Jaws’ Elena Belyea with photography from Anna Cooley, and projection and lighting design from Toni Morrison.

In this performance, the background music and portions of dialogue change from performance to performance based on the outputs of one human and multiple machine learning systems.

“Pygmalion and Galatea” (1784), painting by Laurent Pécheux, Hermitage Museum / Wikimedia, Wikipedia.

The dramatic effect of technology is stark and stunning. Innovations can evoke all sorts of emotions. This is a trope that has been explored for centuries.

For example, Pygmalion crafted an ivory sculpture which he then fell in love with. This ancient Greek myth inspired many works, including Pinocchio, Shakespeare’s The Winter’s Tale, George Bernard Shaw’s Pygmalion, My Fair Lady, and countless others.

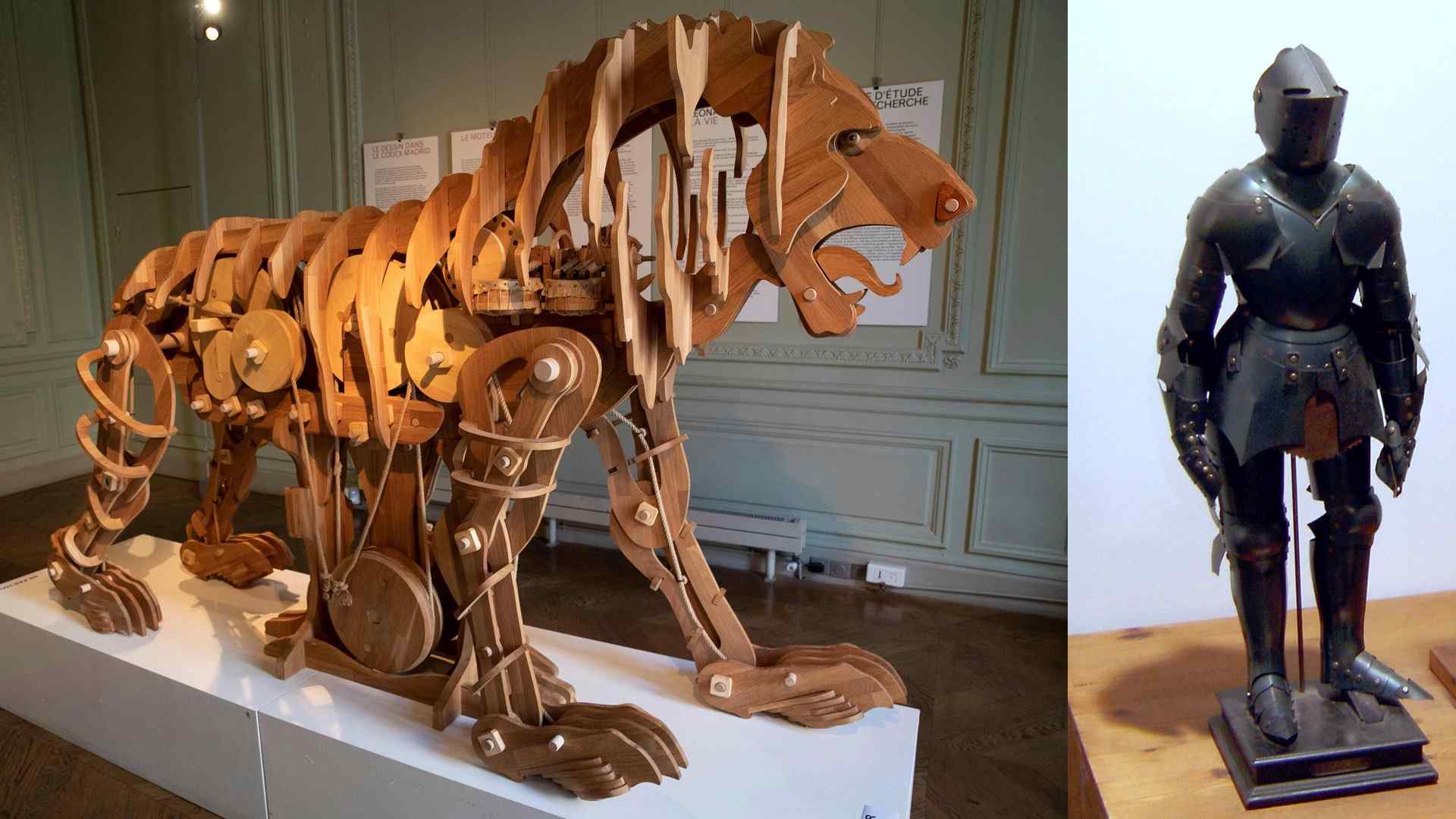

Leonardo da Vinci’s mechanical lion recreated and Model of Leonardo’s robot with inner workings, on display in Berlin

The innovative use of technology traces through history, and includes masterful works by Leonardo da Vinci, including a mechanical lion and an armored automaton knight.

This self-propelled lion was a performative homage to the King of France, and roared to reveal fresh flowers in its mouth.

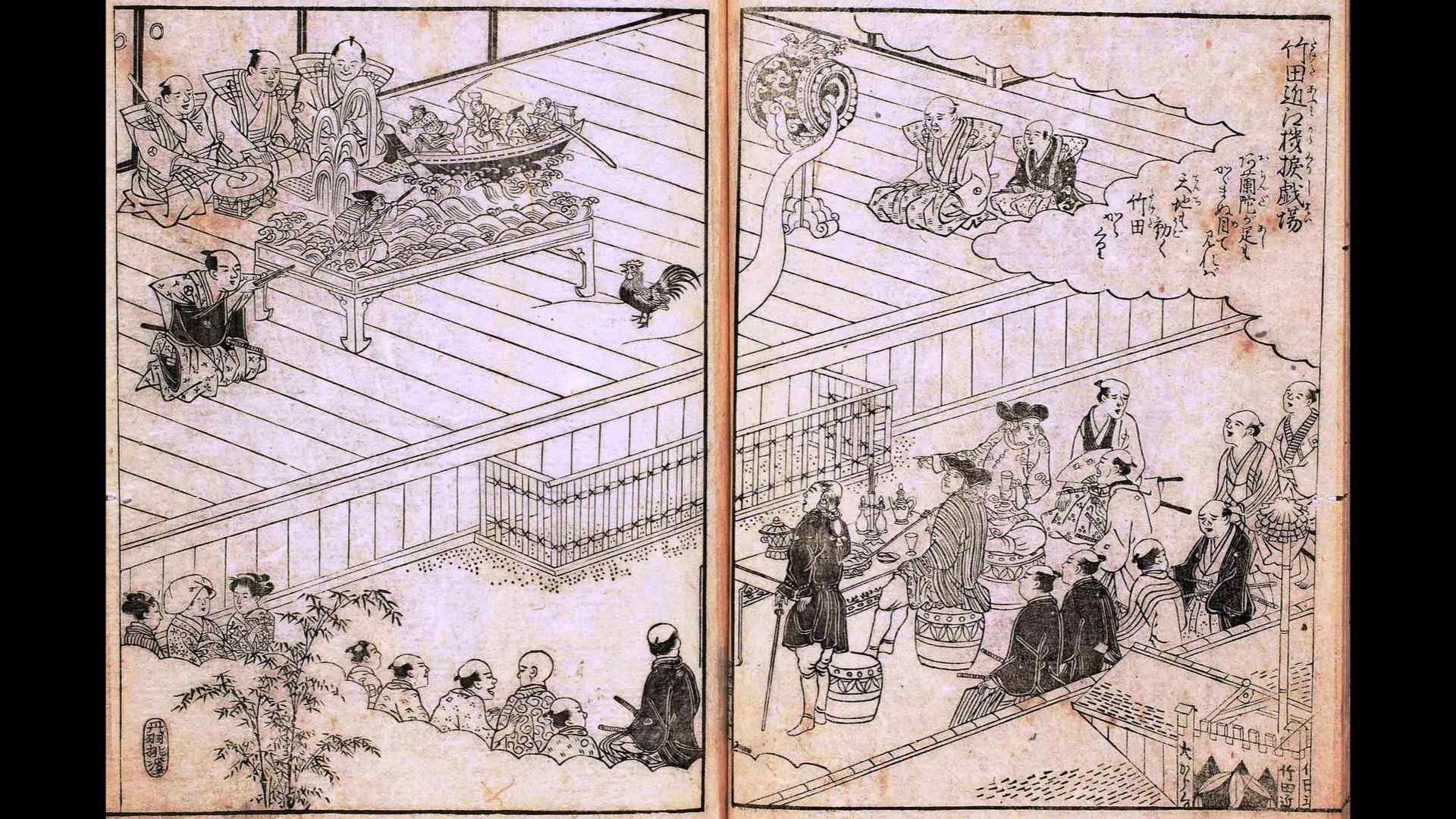

Technology and theatre also span cultural and national borders. In the year 1662, Takeda Omi completed his first butai karakuri mechanical puppet and opened the Automata Theatre Osaka.

These theaters adopted clockwork mechanisms for automata that performed in the early 17th century as karakuri puppets.

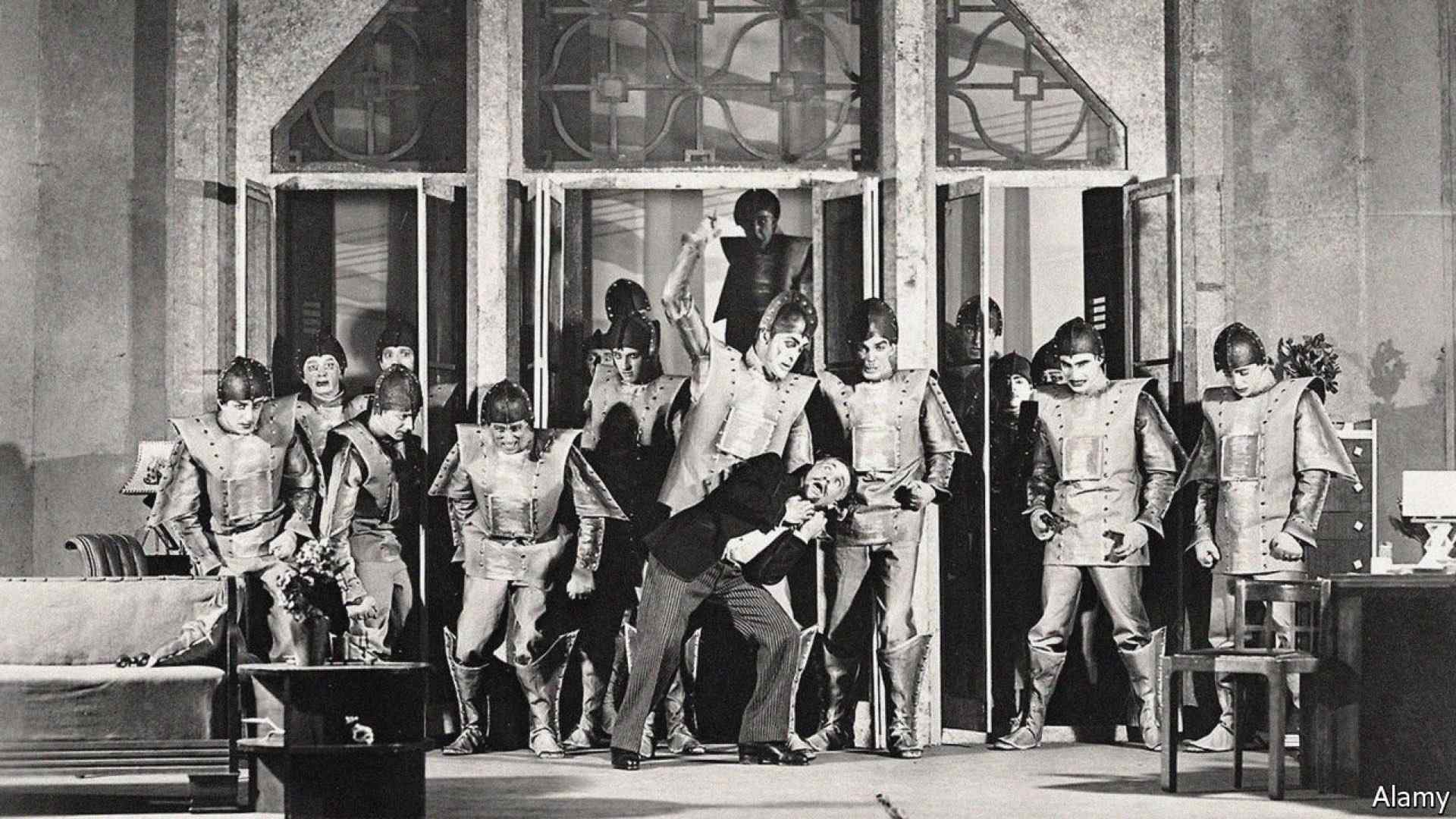

Photograph Theatre Guild touring company’s 1928–1929 production of R.U.R. by Karel Čapek and “R.U.R” foreshadowed fears about artificial intelligence

In 1920, Karel Čapek wrote and performed Rossum’s Universal Robots (R.U.R.), cementing the term robot and also the theatrical tension which arises when humans create intelligent machines. This theme has echoes in Mary Shelley’s Frankenstein, which preceded R.U.R. by a century, and in the millennia-old folklore tales of Golems. I’ve written previously on Frankenstein’s Intellect, and discussed how a creation might gain intelligence through socio-centrism and a longing to communicate.

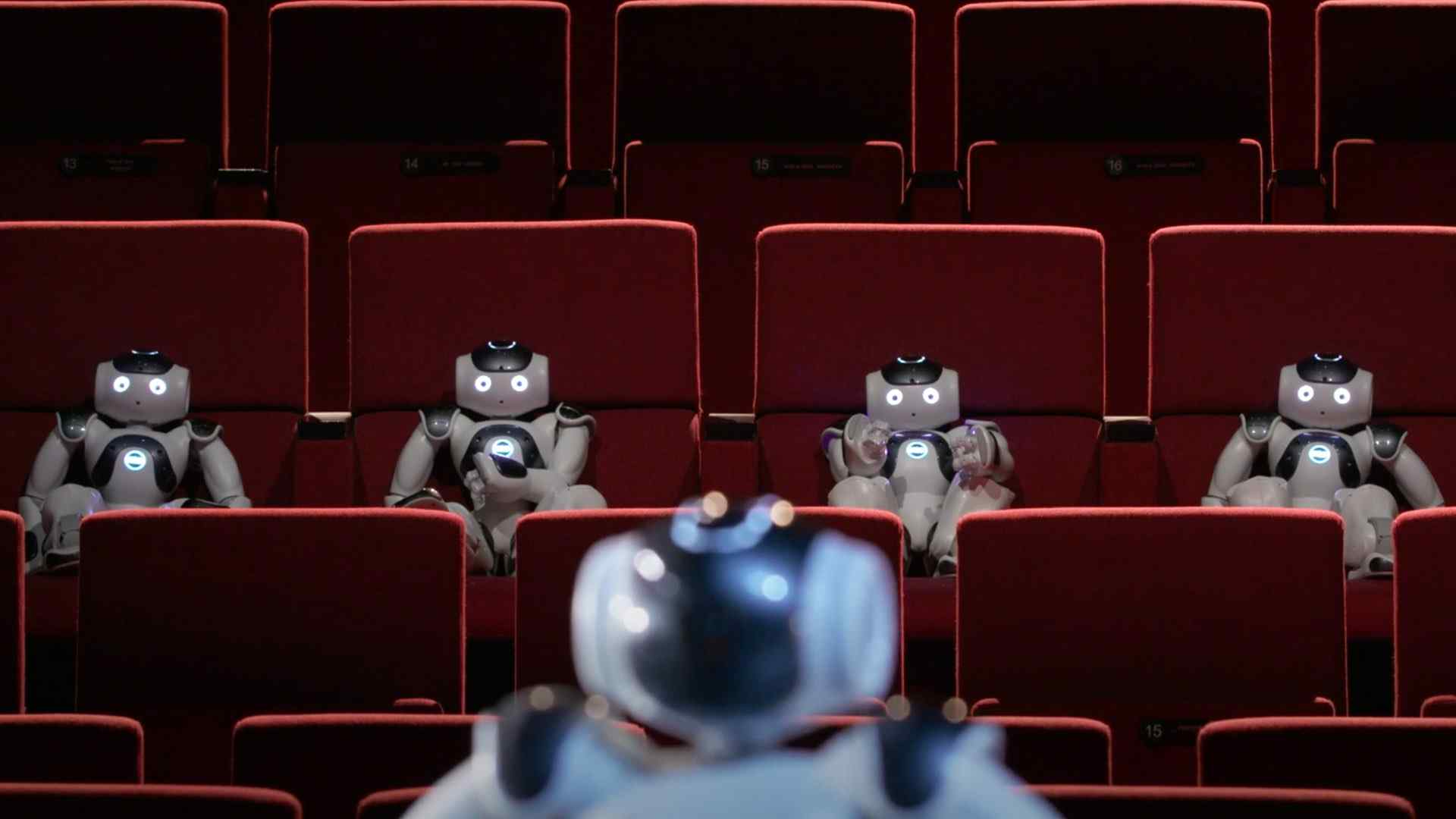

A robot walked into the theatre… from Acting Like a Robot a collaboration between Utrecht University and many collaborators.

We’ve enjoyed over 100 years of robots on stage, and many more years of theatrical automata. And now we jump forward to today. We have robots performing for other robots… almost.

Theatre is now a testbed for robotics, science, and engineering. In fact, I’d make the following bold claim:

improvisational theatre is the ultimate experimental domain for innovative new technology including artificial intelligence.

And, it is not just me! This image is from The Transketeers’ video for a large scale robot theatre project at Utrecht University exploring theatre as a testbed.

Theatre is not just an experimental domain, but an innovative new form of theatrical performance. It allows creators to tell stories that they otherwise would not have been able to.

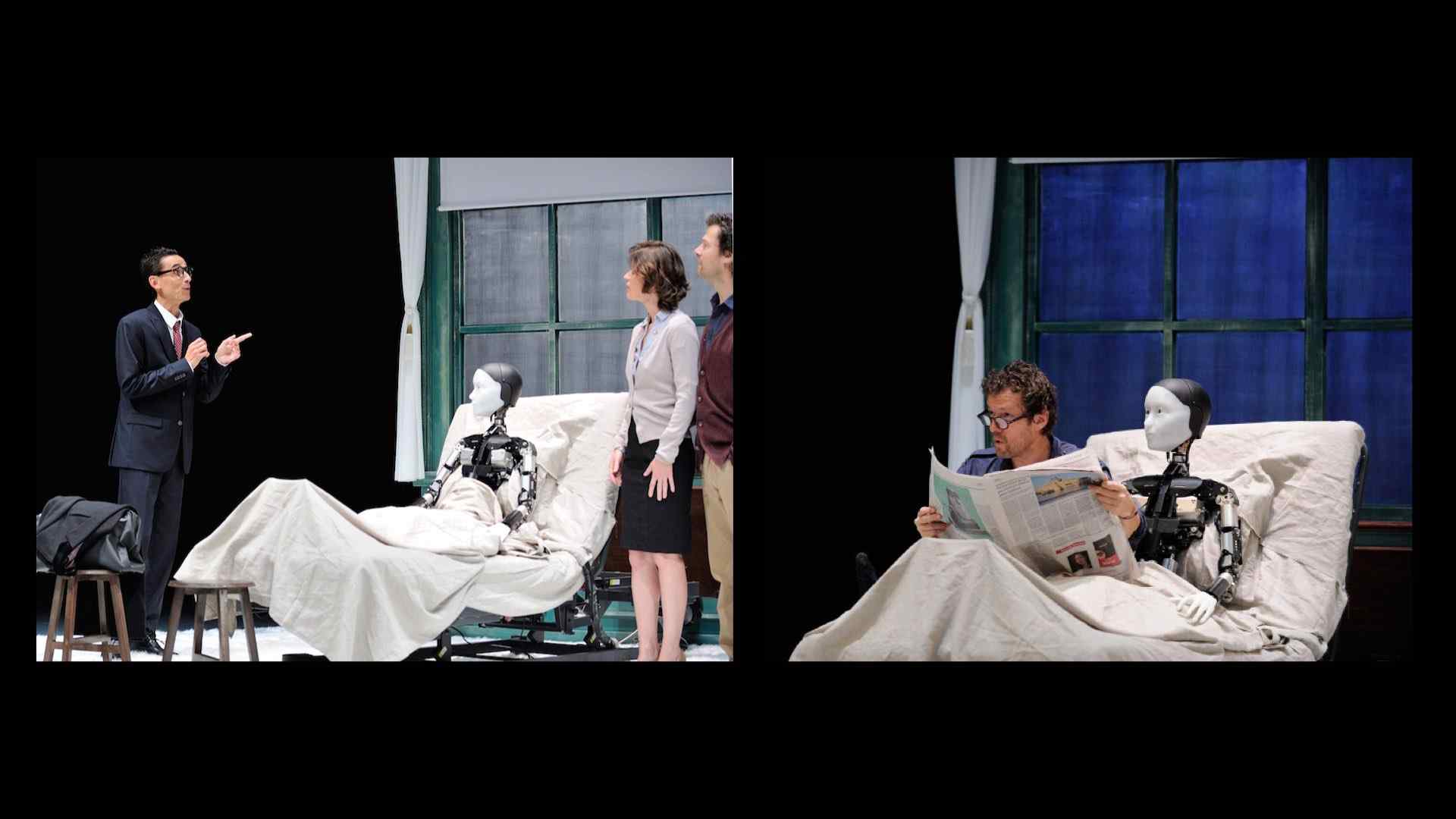

The Gaze of the Robot: Oriza Hirata’s Robot Theatre, Robot takes center stage in Kafka’s “Metamorphosis”, and Oriza Hirata’s Robot Theater Project adaptation of Sartre’s “No Exit” cancelled

For example, a humanoid robot was situated in the Robot Theatre Project’s 2014 staging of The Metamorphosis: Android Version adapted from Franz Kafka’s novel. Themes of alienation and absurdity echo well with androids.

6 Robots Named Paul from Patrick Tresset and 5RNP drawing Nino on YouTube

And, the robot does not need to be humanoid.

In 6 Robots Named Paul (2012), Patrick Tresset presented 6 robots in a site-specific artist’s studio. Audience members were able to have their portrait sketched by 6 of Tresset’s robots.

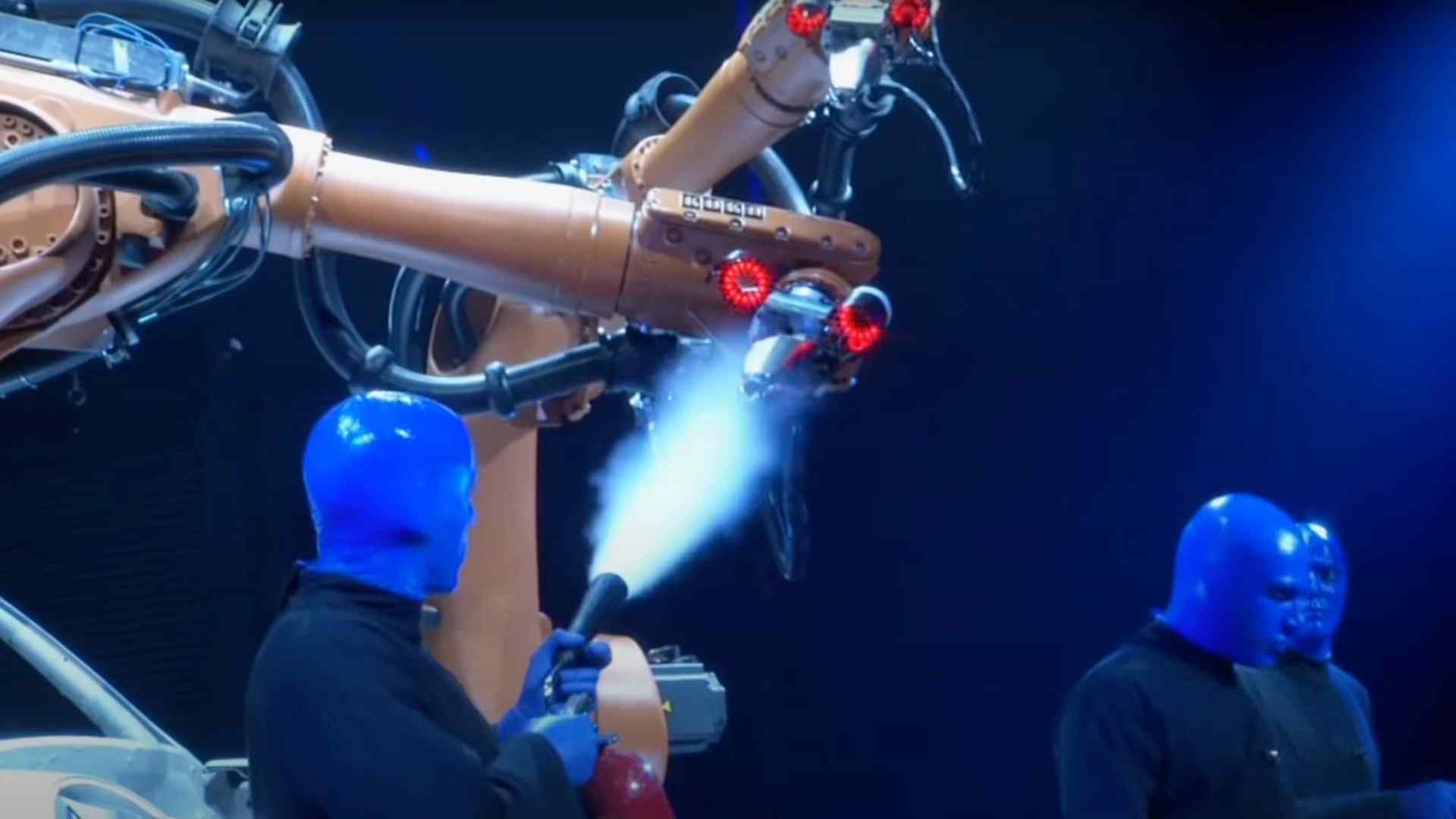

Blue Man Group and KUKA Industrial Robots for Factory Automation on YouTube

These are not only small, independent productions. The Blue Man Group performed with the KUKA Industrial Robots for Factory Automation in 2013 and 2014.

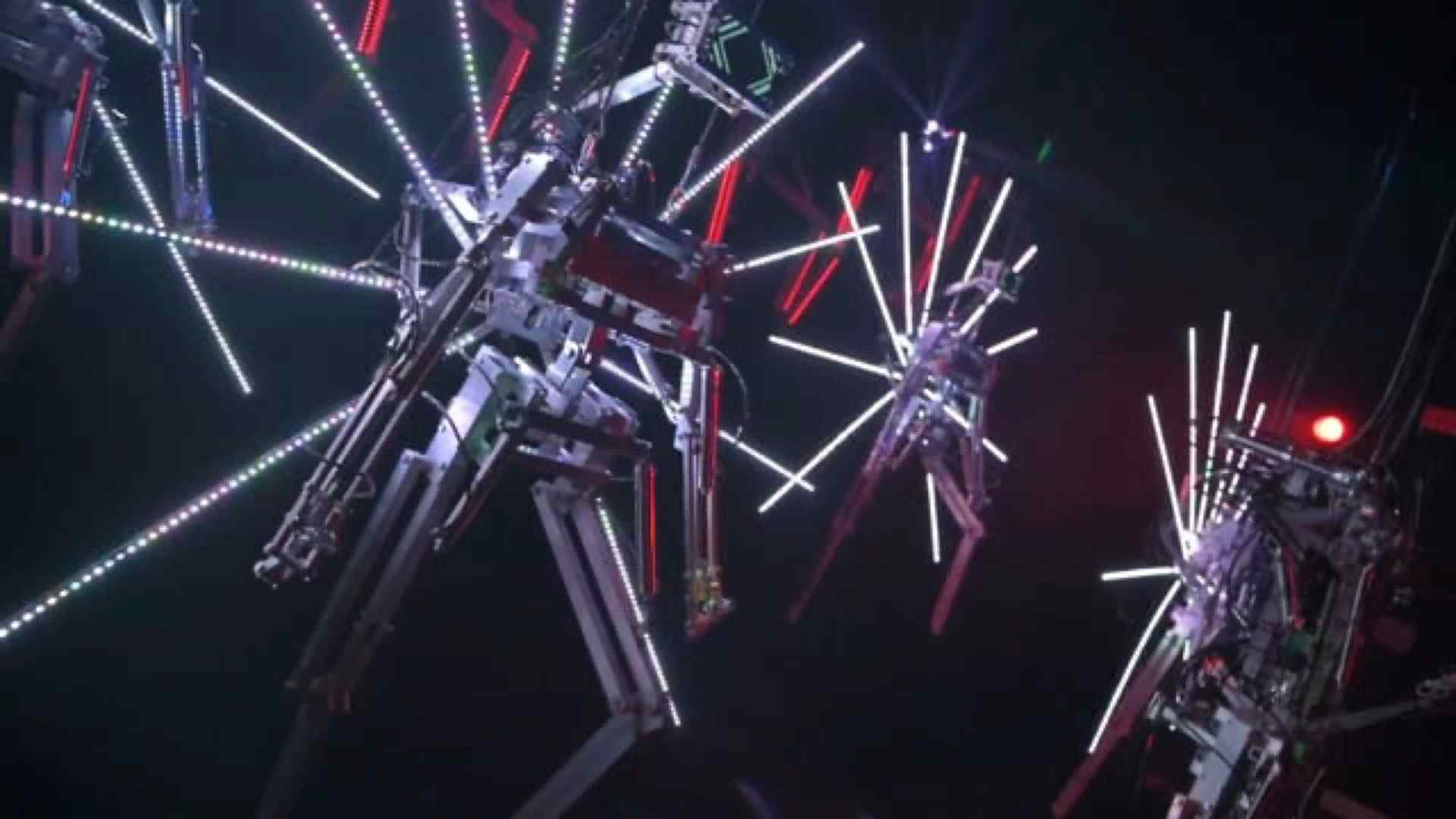

Bill Vorn - Copacabana Machine Sex

Robots on stage provoke and evoke responses that humans alone could not. They can be dangerous and disturbing.

This is one of the many theses of Bill Vorn, the creator of Copacabana Machine Sex, a robotic performance cabaret from 2018.

Inferno from Bill Vorn - Robotic Art

The audience and robots could even act as a single entity, as was done in Bill Vorn’s staging of Inferno, a robotic performance inspired by the representation of the different levels of hell from Dante’s Inferno.

Machines are installed on the viewers’ bodies. Sometimes the viewers are free to move; sometimes they are forced by the machines to act or react in a certain way.

No Body lives here (ODO) and the trailer on Vimeo

There need not be a robot embodiment. No Body Lives Here (ODO) (2020) is an interactive theatre installation for 5 humans and an AI. In the performance, an AI character lives on stage as cameras, screens, microphones, and smart phones. It collects stories to understand how our human world works.

The Rise of Robotic Theatre, Culture.pl

The machines may not perform alongside humans at all, as in the performance of Prince Ferrix and Princess Crystal by 3 RoboThespians at the Copernicus Science Centre in 2010.

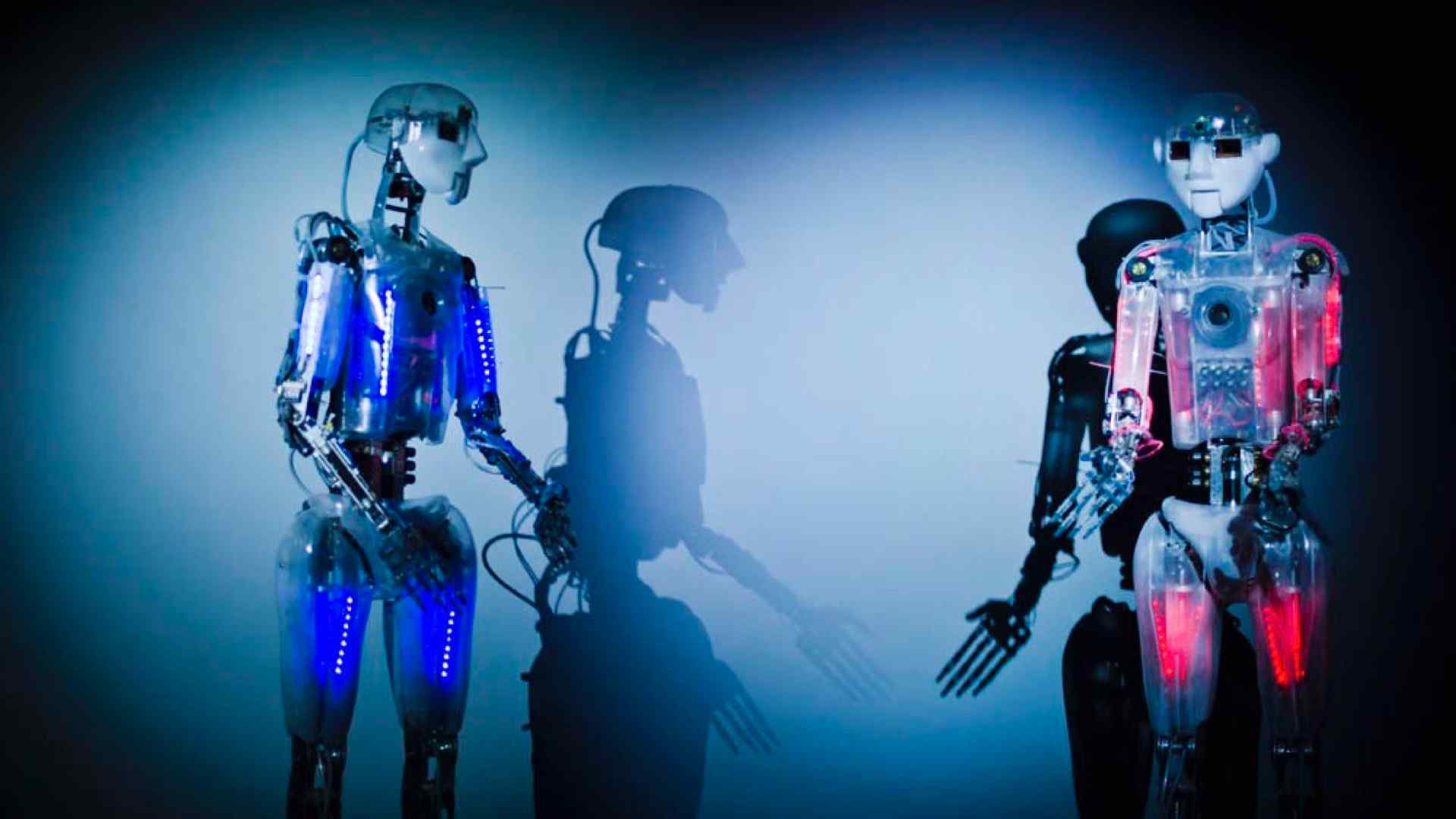

The Uncanny Robots Project, Creative Technology Lab, FCAD. Oct 2019.

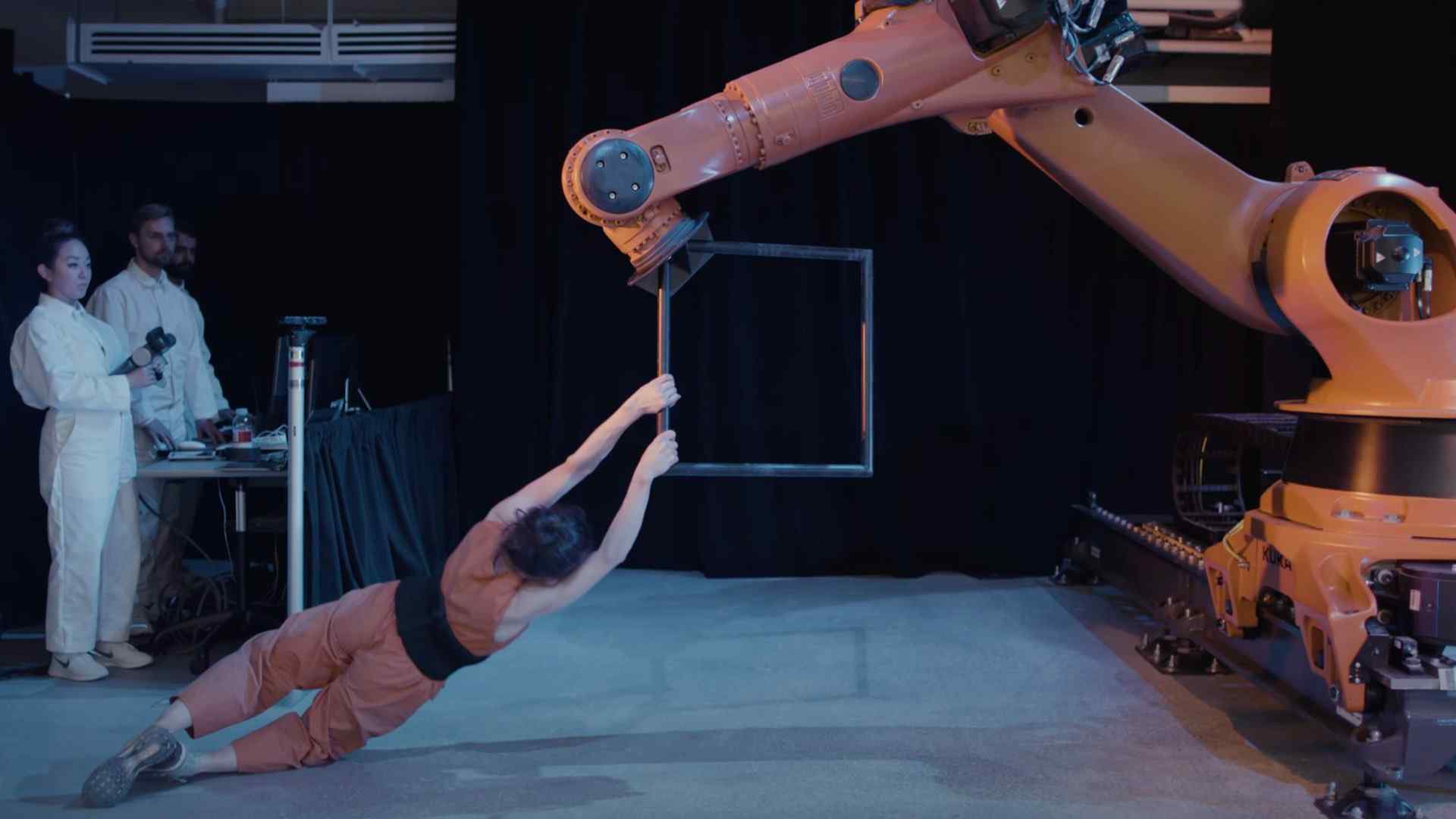

Robotic co-performers have been explored in dramatic theatre, site-specific theatre, improvised theatre, and dance. For example, The Uncanny Robots Project (2019) created a 15-minute dance duet, choreographed by and featuring Belinda McGuire and a KUKA robot.

A human-robot dance duet | Huang Yi & KUKA from TED on YouTube

Huang Yi presented A human-robot dance duet with a KUKA robot at TED 2017.

Noga Erez’s YOU SO DONE on YouTube The Full Story and the official music video on YouTube.

More recently, a more bombastic style of human-robot choreography was used in a 2020 music video from Israeli artist Noga Erez for the song You So Done.

Do You Love Me? from Boston Dynamics (2020)

The dance choreography need not even include humans, as Boston Dynamics continues to prove. This multi-robot choreography includes all sorts of acrobatic moves executed in sync, and perfectly timed to the upbeat music.

Robot Dreams from MEINHARDT & KRAUSS, trailer on Vimeo, and event listing on Teatro Municipal do Porto

These robots are not only performative tools, they also unlock narratives. In Robot Dreams (2018), dancers meet robots and they act as mirrors of ourselves, reflecting our loss of humanity while the performers slowly turn into cyborgs.

My Square Lady, a Robot vs. Opera Project. Photo by David Baltzer.

In an homage to My Fair Lady and Pygmalion, Gob Squad dreamed up My Square Lady. A robot called Myon, together with the Berlin Opera, tried to pass as a human, using all the means of the world of opera to teach it about the most human of qualities: emotions.

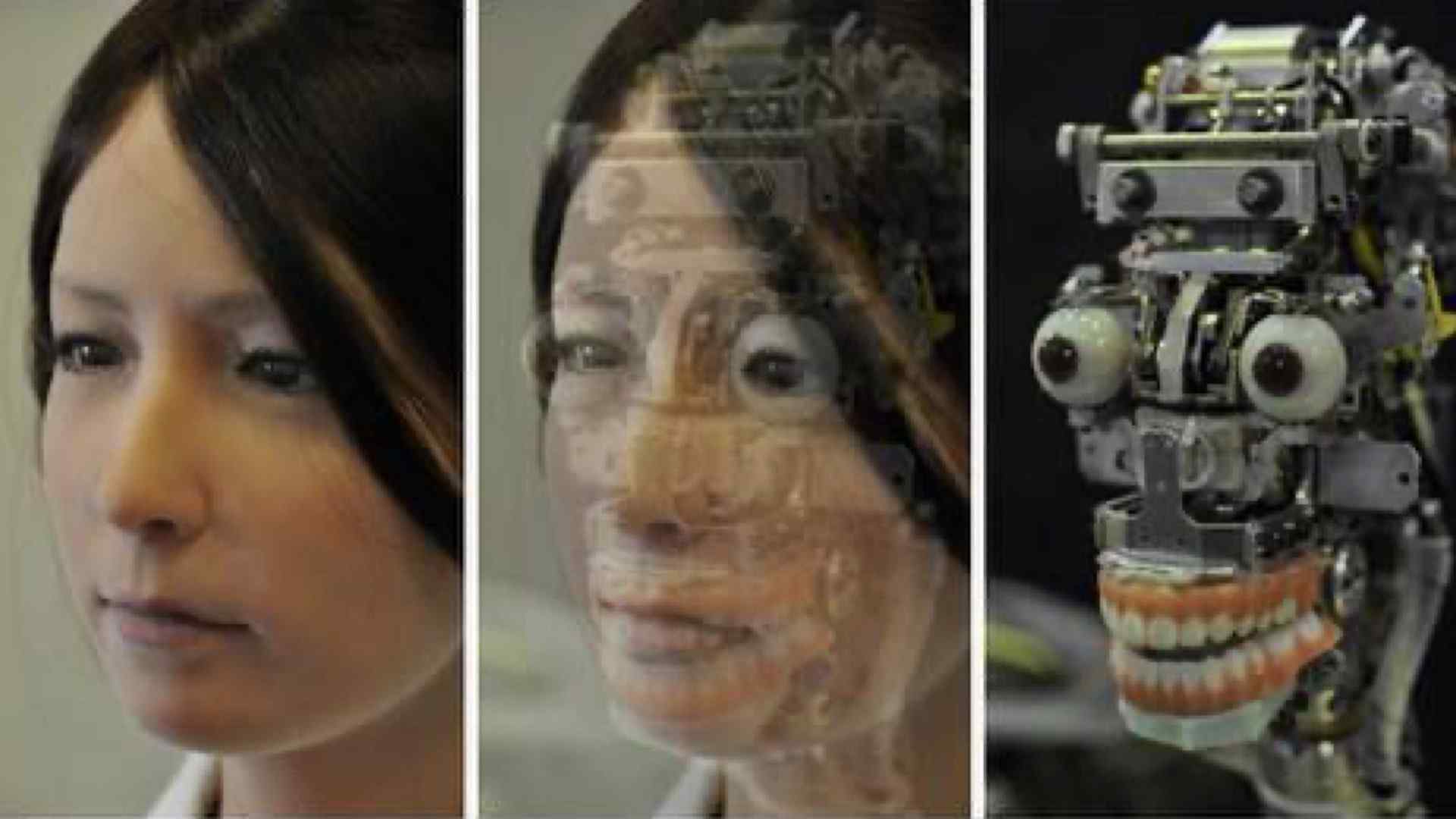

While some artists prefer to develop theatre for humans and technology, others prefer to use the uncanny naturalness of humanoid robotics as an artistic theatre statement. Some of the most well-known anthropomorphic performers are those developed by Professor Hiroshi Ishiguro at Osaka University.

These include the android Geminoid F.

Androido Human Theater Sayonara on YouTube

Geminoid F co-starred in Sayonara (2014), which has since been adapted into a feature-length film of the same name.

Chekhov, Android And Robot: Life As Japanese Theater from Contra Spem Spero… Et Rideo

Geminoid F also appeared in director Oriza Hirata’s 2013 production of Anton Chekhov’s Three Sisters in Moscow. Notably, Geminoid F’s more robotic-looking co-star was a robot named Robovie-R3.

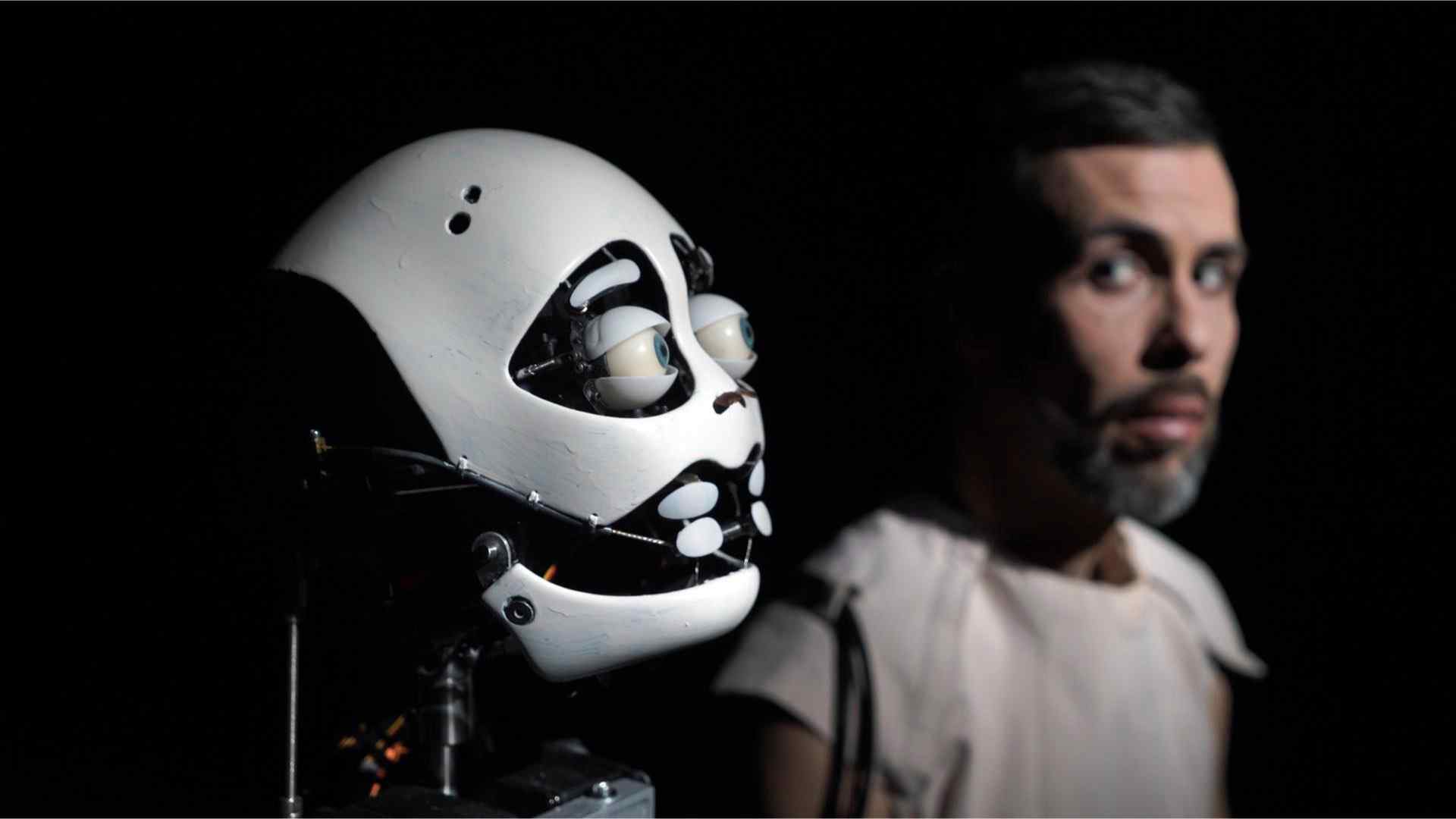

Uncanny Valley - Trailer Englisch (Stefan Kaegi) on Vimeo

Humans have shared the stage with ultra human-like robots, and these humanoid androids are starting to perform on stage, alone. This is done particularly compellingly in the 2021 performance of Uncanny Valley by Stefan Kaegi.

In this work, a collaborator allowed an animatronic double of himself to be made. This humanoid takes the author’s place and questions: what does it mean for the original when the copy takes over?

‘Ai-Da: Portrait of the Robot’ at the Design Museum on YouTube, exhibition listing, and The Intersection of Art and AI | Ai-Da Robot | TEDxOxford on YouTube

And what happens when the android becomes a multi-disciplinary artist?

Such is the question being asked by Aidan Meller, director of the Oxford Art Gallery, and creator of Ai-Da, the self-proclaimed world’s first artist robot. Ai-Da is an art machine and a performance art piece in and of itself.

And a humanoid creation challenging our vision of what it means to be human is something that is not explored only by Ai-Da. Many of you may know Sophia, by Hanson Robotics, from 2016.

In 2017, Sophia was the first robot to receive citizenship of any country. This is a photo of Thomas Riccio, then creative director with Hanson Robotics, driving with Sophia.

Who Is Hua Zhibing, The First Digital Universitary Student for Enkey Magazine

While the humanoid robot might be unsettling, it need not even be a robotic performer to explore machine immersion in humanity. This is explored by a recent innovation which situates Hua Zhibing as the first digital student at Tsinghua University. Hua Zhibing is based on Wudao 2.0, the latest version of a China-developed deep learning model.

While it is unclear from the media which covered the story what exactly this means, the idea of machine learning systems becoming citizens, or students at a university, continues to challenge our notions of what is real, what is possible, and what is theatre.

3D Seven Ages of Man speech from As You Like It from Royal Shakespeare Company and video on YouTube

Live performance can even be situated in your own home as is the promise of the Royal Shakespeare Company’s ’tabletop theatre’ experience in a 2019 production of As You Like It. This 3D performance is created with Magic Leap and visible through an augmented reality headset in your own living room.

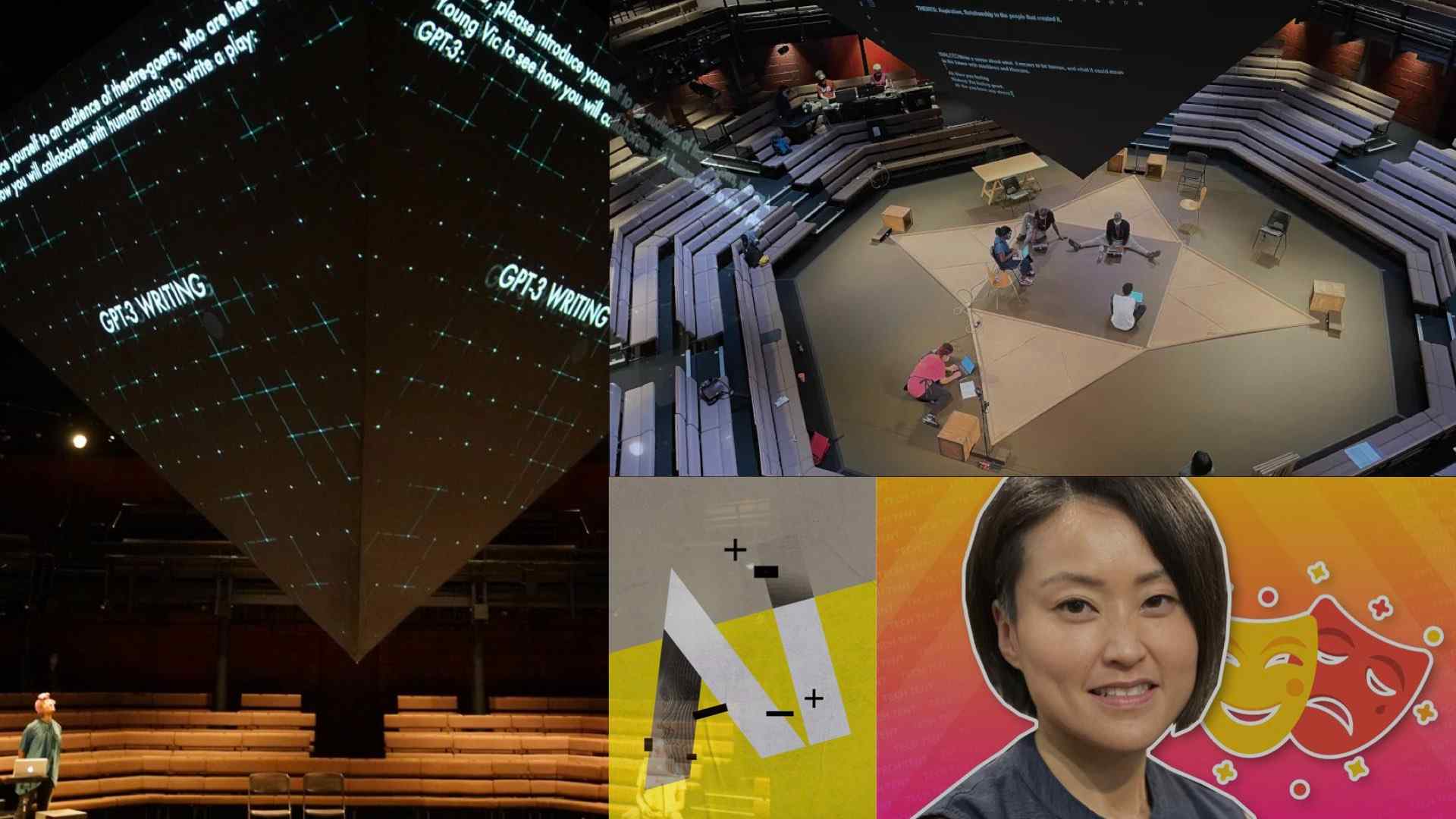

AI: WHEN A ROBOT WRITES A PLAY, professional discussion on YouTube

So, we return to the theatre stage but this time realizing that we need not have the humanoid robot, nor any robot on the stage.

In AI: WHEN A ROBOT WRITES A PLAY (2021) from THEaiTRE, the AI is tasked with writing the script for the play. It uses a large language model to generate the play script (or 90% of the Czech script as the creators claim). Then, the script is directed and performed by humans.

I went to a play written by AI; it was like looking in a circus mirror for TechRadar, Tech Tent: Can AI write a play? for BBC, and Rise of the robo-drama: Young Vic creates new play using artificial intelligence.

In a similar vein, in AI (2021 at The Young Vic in London, England) the deep-learning system GPT-3 was used to generate human-like dialogue and script.

In this unique hybrid of research and performance from Director Jennifer Tang, the script and insights into how the artists collaborate with the system were brought to life by the writers, actors and company across a series of evenings.

Source: Kory Mathewson

I believe that there is something lost in many of these large scale main stage productions.

That something special is captured in this photo from an immersive performance I staged in 2019 in Edmonton. What is missing is the joy, delight, and fun that happen in a one-on-one interaction with a machine mind.

Humans and robots have a unique relationship. And we long to explore that relationship in intimate, personal settings.

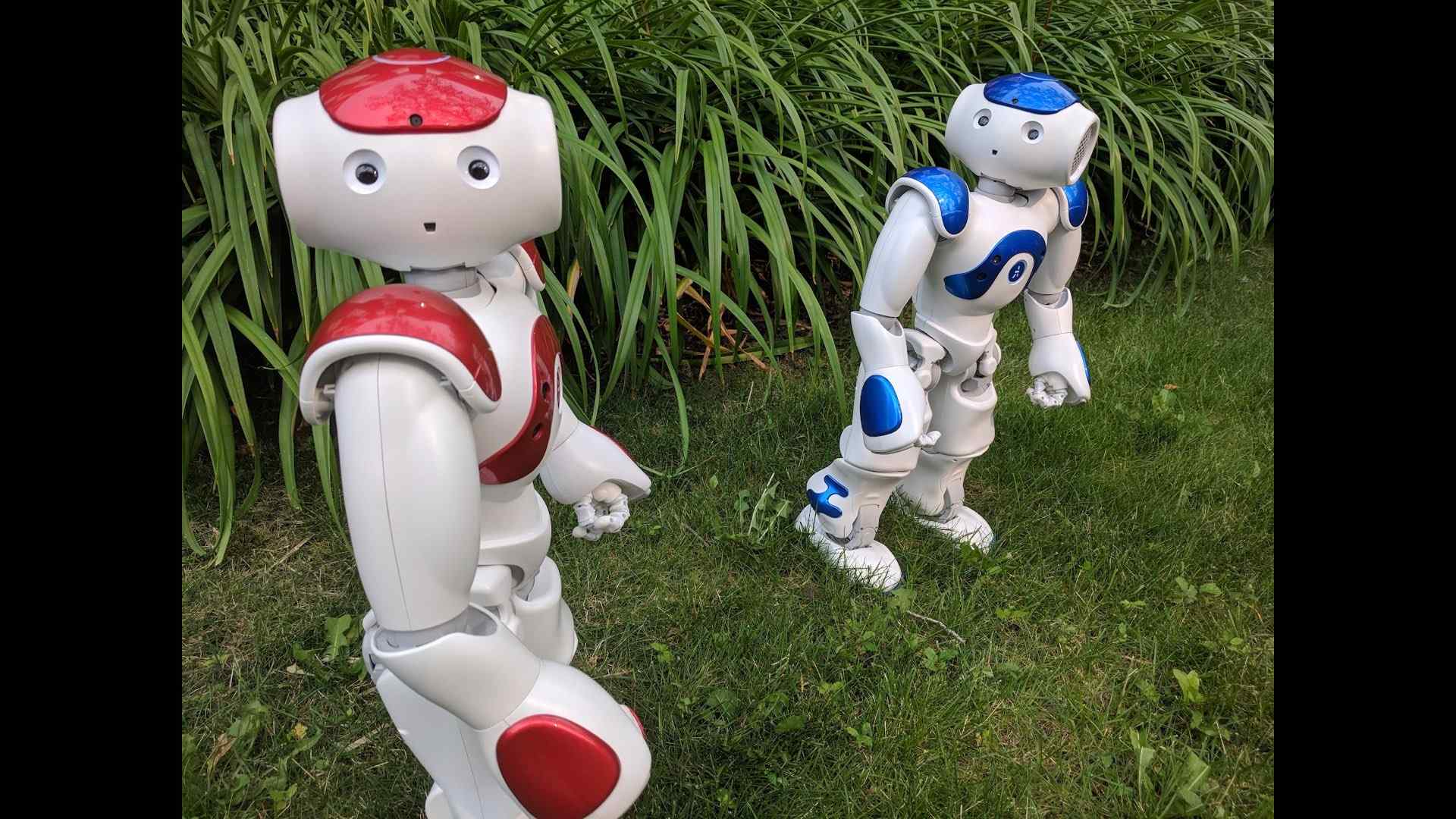

Blueberry and Raspberry (SoftBank Aldebaran Robotics Nao) in HumanMachine at the 2018 Edmonton Fringe as Raspmeo and Blueberiette, YouTube

Because these robots are cute. And we are curious about what they can do.

Piotr Mirowski, Kory Mathewson and A.L.Ex in binary2 (2017) by HumanMachine, photo Ross Gamble

And that is what motivates my work, in putting humans and robotic performers on stage together to create improvised theatre.

In this work, my long time collaborator Piotr Mirowski and robot A.L.Ex. perform a scene as my image is projected from an ocean away.

Piotr Mirowski, Kory Mathewson and A.L.Ex in Artificial Intelligence Improvisation (2017) by HumanMachine, Edinburgh Festival Fringe, photo Alessia Pannese

In this photo, Piotr and I perform with A.L.Ex. at the Edinburgh Fringe Festival 2018.

You can see that in these works, the small, non-humanoid robot is a digital puppet, a technological marionette with less body mechanic and more mechanical body.

Alain Rinckhout and A.L.Ex (2020) in Improbotics Flanders Première, photo Peter Theunissen

And the scale of our robot performers means that we can deploy these robotic improvisors around the world, with different performers, and they can even perform in different languages.

For instance, this is Improbotics in Belgium where A.L.Ex. and an improvisor are performing together in Dutch.

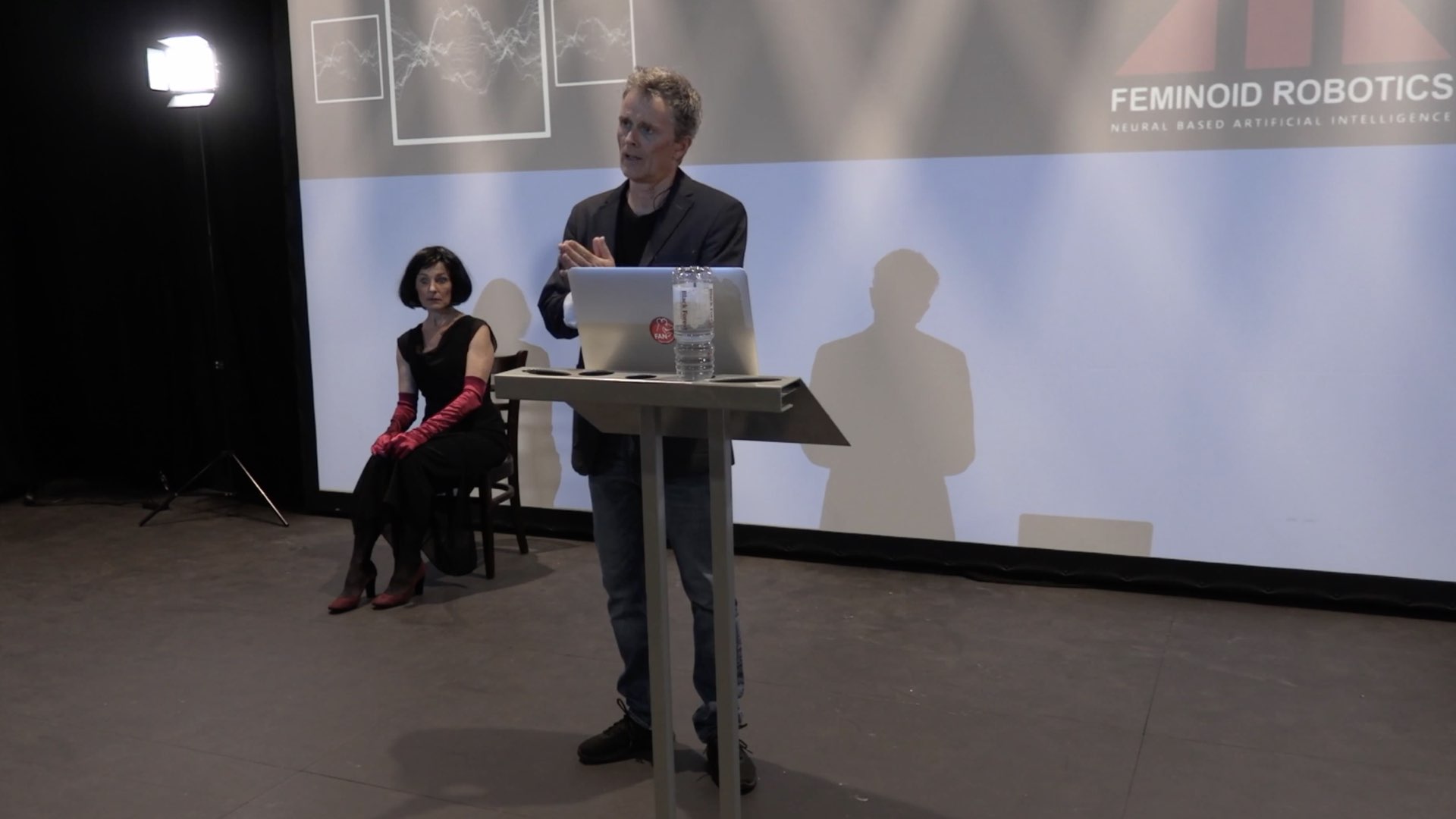

Silicon Woman (2021) from Gunter Lösel and Nike Erichsen.

Our tools have been deployed in many different theatres around the world, including in The Stupid Lover’s & Friends 2021 production of Silicon Woman, in which Nike Erichsen, playing Olympia2, received and spoke AI-generated pop song lines and, along with Gunter Lösel, explored the relationship between robot and builder.

Performance of Rosetta Code using the Visual Director by Boyd Branch (2021) by Improbotics, photo Lidia Crisafulli

And my work extends well beyond robots and language models.

Augmented reality is a new tool we are using in our shows for multiple functions: 1) to situate human actors together in digital scenes, and 2) to compose multiple human actors from multiple locations around the world together.

Performance of Rosetta Code using VQGAN+CLIP (2021) by Improbotics, photo Erika Hughes

And we can use all sorts of new and interesting machine learning tools, such as generative adversarial networks, or GANs, to generate images and videos and build creative expressions that are unique and novel, live and in the moment.

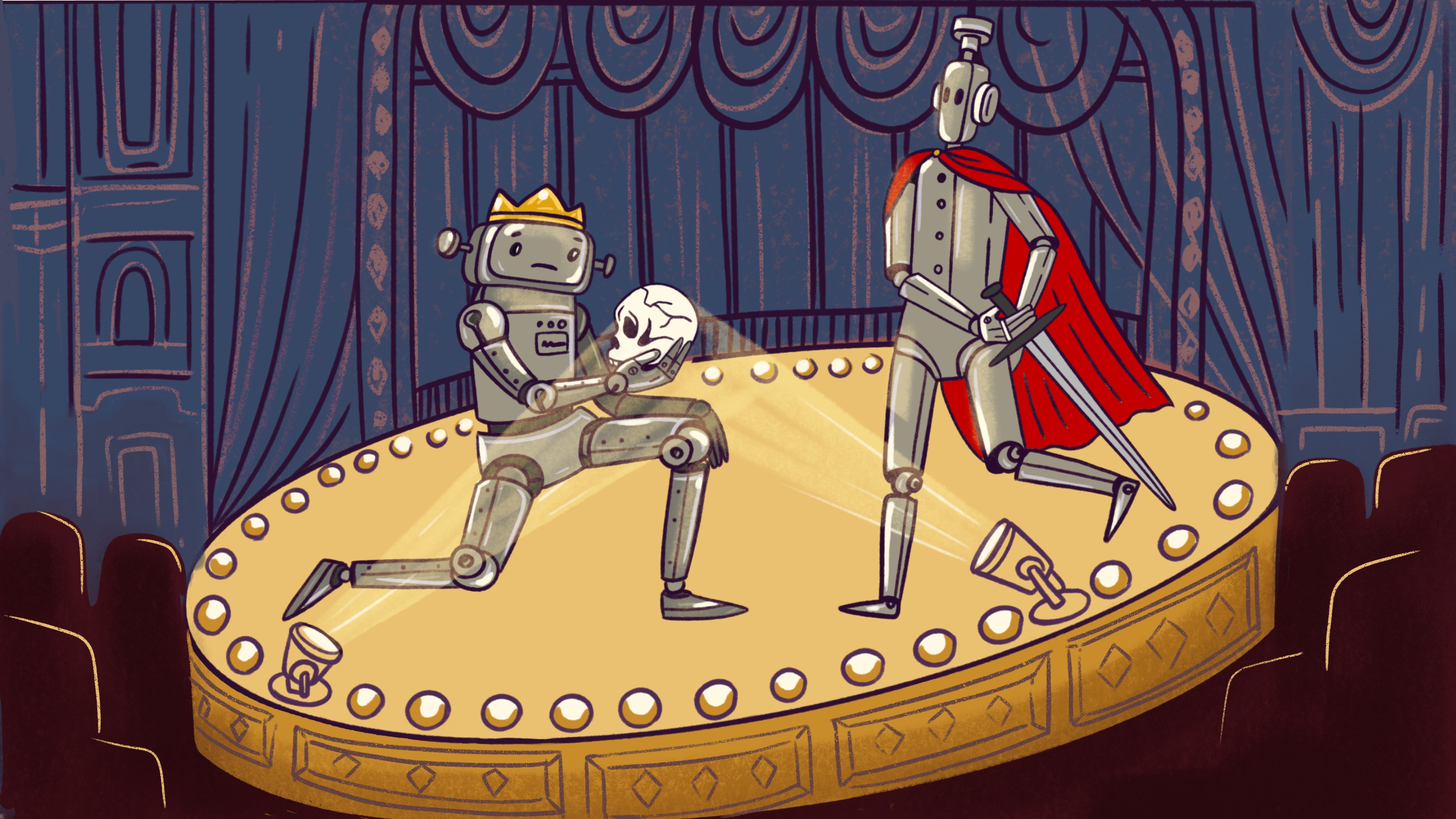

Robots performing on stage.

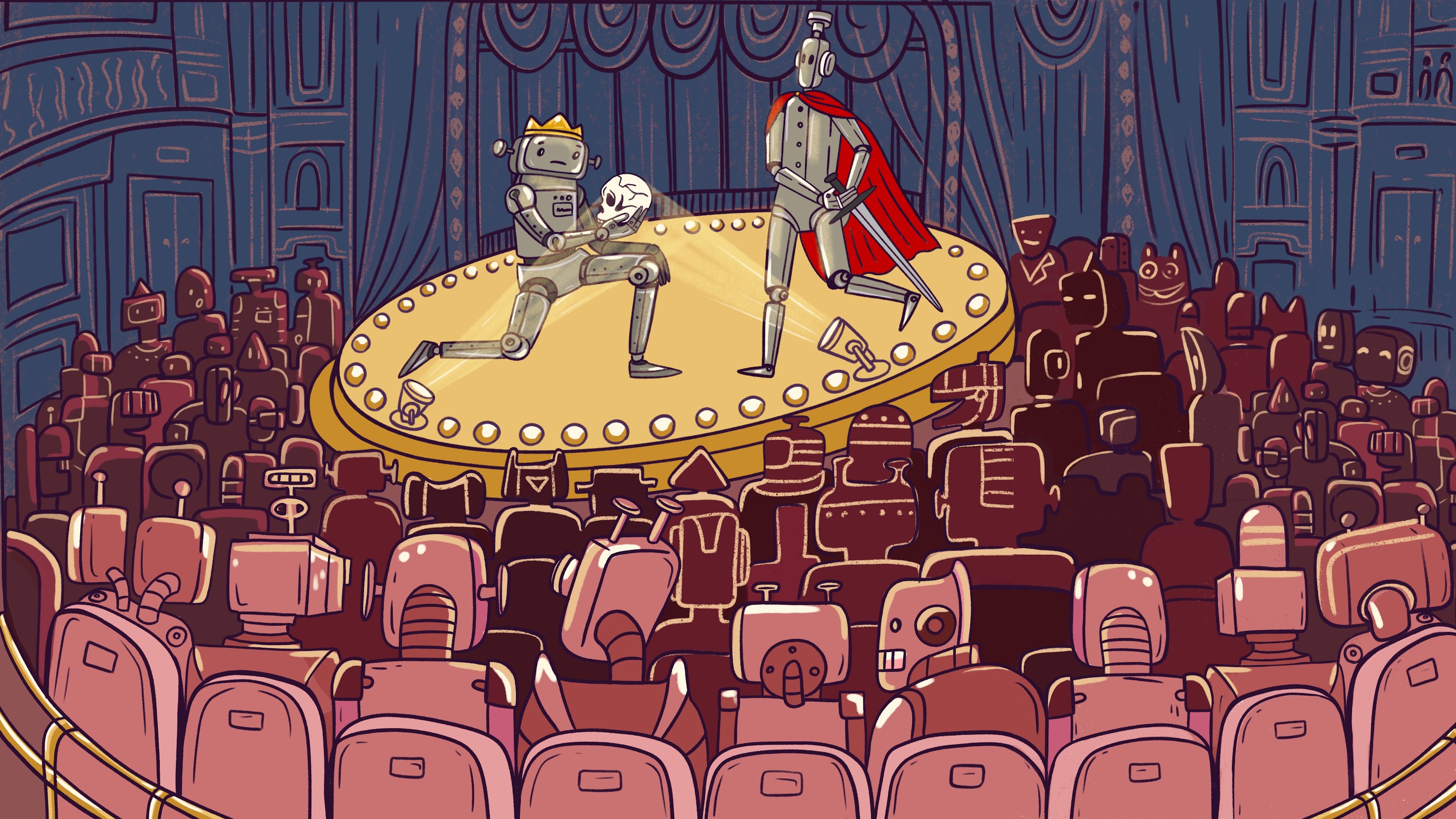

So, after all this, I’d like to leave you with a mental image…

I imagine that this might be what it looks like to see the future of live theatre. For us humans to be a fly on the wall and peek into a theatre, and see robots performing live on stage.

Robots performing on stage…

to an audience of robots.

And not only would there be robots on stage, but they would be performing for a room full of robots. The lights and sounds would be completely automated, the entirety of the performance created by digital systems, creating for each other, live and in the moment.

Because, live theatre need not be live at all.

FutureTheatre greatly expands the space of possible theatre experiences.

Thank you for reading and for your time. If these ideas resonated, I look forward to connecting with you. Please reach out to continue the discussion @korymath.